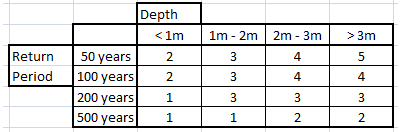

As previously discussed, risk scoring is a powerful way to evaluate risk by including disparate and complex datasets, business rules, and experience — all prioritized and weighted — in an algorithm. While it’s not a new idea, it is underutilized. Therefore, I think it's worth looking at a few examples of how it's done. (Since it’s a vast subject, this post will concentrate on flood risk scoring.)
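To make the idea of "prioritized and weighted" inputs concrete, here is a minimal sketch of a weighted score. It is an illustration only, not the method used in the post: the `Factor` type, the `risk_score` function, the factor names, and the weights are all hypothetical, and each factor value is assumed to be already normalized to a 0 to 1 scale (1 being the riskiest).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Factor:
    """One input to the score (hypothetical structure for illustration)."""
    name: str
    weight: float  # relative importance assigned by the business
    value: float   # assumed pre-normalized: 0.0 = lowest risk, 1.0 = highest


def risk_score(factors):
    """Return a 0-100 score as the weight-normalized average of factor values."""
    total_weight = sum(f.weight for f in factors)
    if total_weight <= 0:
        raise ValueError("total weight must be positive")
    for f in factors:
        if not 0.0 <= f.value <= 1.0:
            raise ValueError(f"{f.name}: value must be between 0 and 1")
    weighted_sum = sum(f.weight * f.value for f in factors)
    return 100.0 * weighted_sum / total_weight


# Hypothetical flood-related factors; names and weights are made up.
example = [
    Factor("low_elevation", weight=3.0, value=1.0),
    Factor("near_water", weight=1.0, value=0.0),
]
print(risk_score(example))
```

In a sketch like this, the weights are where business rules and experience enter, so changing a weight changes how much a given dataset moves the final score.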

Topics: Insurance Underwriting, Risk Management, Flood Risk, Risk Scoring

Plug The Leak: Reduce Underwriting Leakage With Better Data

Posted by Ivan P. Maddox on Jun 19, 2018 4:03:34 PM

Not only is insurance an industry that is based on a general inability to predict what is going to happen, it is a hyper-competitive industry in which the winners are those who can best predict the unknowable…or at least be less wrong than their competition. Insurance underwriting is the actual process of pricing the unknowable, and it is necessarily performed with rigorous processes underpinned by vast amounts of data. However, underwriting is never perfect, and the gap between actual underwriting and perfection is called underwriting leakage.

Topics: Insurance Underwriting, Risk Management, Insurance Protection Gap

If everyone uses the same risk model, is it still useful?

Posted by Ivan Maddox on Apr 4, 2018 8:03:01 AM

One of the recurrent themes of this blog is to explore the usefulness and limitations of risk models. This post explores the implications of the widespread — in some cases, universal — use of these models. Is there a limit to a model’s usefulness if everyone is using it? How can a model’s limitations be overcome?

When a peril is well modeled, and that model is comprehensively applied throughout a market by both the carriers and re-insurers, it becomes very difficult to differentiate coverage because everyone has priced the risk similarly. The implications of this blanket usage begin to manifest when nothing happens for a while; i.e., when no significant catastrophe fulfills the model’s predictions. The capacity to cover the expected loss is collected by everyone, and with no claims to release the capital, the market gets soft. Competition becomes tighter, and it becomes necessary to look for new markets, or entirely new activities, to maintain a constant level of premium.

This recent article from Intelligent Insurer explores this phenomenon in the current reinsurance market. The big boys are moving to specialty reinsurance and even primary insurance amid a very soft market. Naïve capital accumulates, and the only outlet is a catastrophe that is unexpected (i.e., unmodeled), which releases the excess capacity through claims that exceed predictions.

Topics: Insurance Underwriting, Risk Management, Other Risk Models

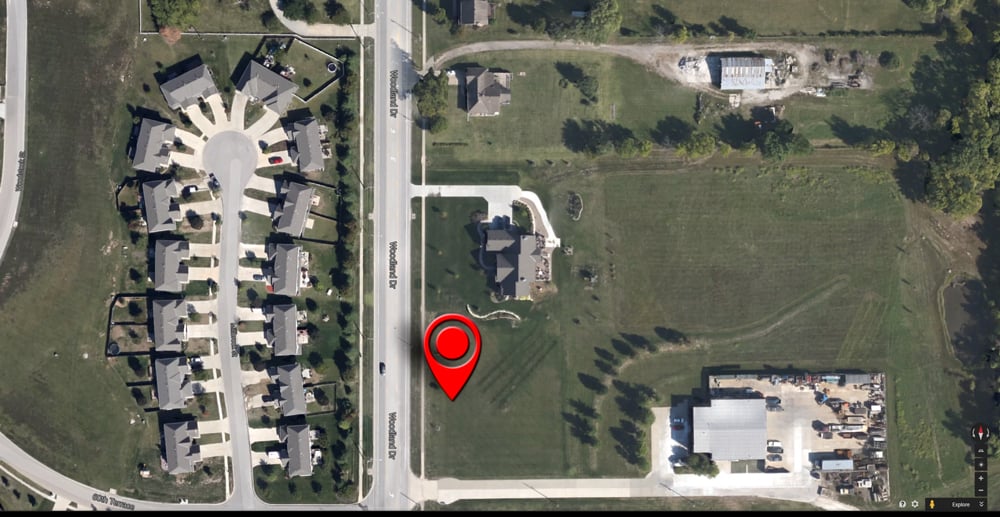

As risk assessments get better and better, the limiting factor on how well they work is almost always geocoding. A long time ago, we took a look at geocoding for underwriting and discussed how important it is. Well, everything that was true and valid in early 2015 remains true and valid.

What would an underwriter say if they heard that a monumental amount of unwritten premium was about to become available in a few months? This is something property underwriters are contemplating as the NFIP re-authorization approaches. But is that the right question?

Topics: Insurance Underwriting, Underwriting Profit, Effective Underwriting